Vibe coding and production: a survival manual for IT decision-makers

By a Senior Product Manager, practitioner of AI-assisted production

Preamble: dispelling a semantic misunderstanding

First and foremost, it is worth clarifying a term that causes as much confusion in executive circles as on technical floors. Vibe coding is not synonymous with intensive use of artificial intelligence to generate code. Using Cursor, Copilot, or Claude to write the bulk of your code while staying in a tight feedback loop with the model is now a commonplace form of AI-assisted development. In that loop, you review, validate, and correct each suggestion. That is not vibe coding.

The authoritative definition, formulated by Andrej Karpathy, is far more radical: vibe coding means giving yourself over to the vibes, embracing the exponential, and forgetting that code exists. The key phrase, the one that every decision-maker should pay attention to, is the last one: forgetting that code exists.

This paradigm shift is not a cosmetic detail. It redefines the very nature of the relationship between the engineer and their artifact, and consequently transforms the governance of the information systems you oversee.

Why vibe coding should matter to IT leaders

The first wave of vibe coding produced mixed results. In the success column are video games, prototypes, and personal projects, where failure is tolerable. In the disaster column are applications pushed into production by novices. Their consequences were predictable: exposed API keys, bypassed authentication, exhausted usage quotas, and databases polluted by uncontrolled writes. Vibe coding therefore seemed confined to recreational use or, at best, to exploration.

So why, as an IT decision-maker, should you concern yourself with a practice apparently reserved for amateurs or low-stakes prototypes?

The answer comes down to one word: the exponential.

The length of tasks that AI can complete autonomously doubles roughly every seven months. At today's level, there is no need to vibe code. You can let your engineer drive Claude Code or Cursor on a feature, then review the result in full. The cost of verification remains reasonable relative to the productivity gain.

But let us project forward. In twelve months, then in twenty-four, these systems will produce the equivalent of a full day's work in a single autonomous run, and later a full week's. At that point, it will become materially impossible to stay in lockstep with the machine. The engineer who insists on reading every line becomes the bottleneck of their organization. The refusal to adapt will be paid for not in technical debt, but in competitiveness.
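To make the projection concrete, here is a minimal Python sketch that applies the seven-month doubling period cited above. The one-hour starting task length is a hypothetical baseline chosen for illustration, not a measured figure. Only the doubling period comes from the text.

```python
def growth_factor(months: float, doubling_period_months: float = 7.0) -> float:
    """Multiplicative growth after `months` if task length doubles every period."""
    return 2 ** (months / doubling_period_months)


def projected_task_hours(baseline_hours: float, months: float,
                         doubling_period_months: float = 7.0) -> float:
    """Projected autonomous task length, starting from a baseline you supply."""
    return baseline_hours * growth_factor(months, doubling_period_months)


if __name__ == "__main__":
    baseline = 1.0  # hypothetical baseline: one hour of autonomous work today
    for months in (0, 12, 24, 36):
        factor = growth_factor(months)
        hours = projected_task_hours(baseline, months)
        print(f"in {months:2d} months: x{factor:5.1f}, about {hours:5.1f} h of work")
```

Under these assumptions, a one-hour baseline grows to about 3.3 hours after twelve months and about 11 hours after twenty-four. After thirty-six months it reaches about 35 hours, close to a working week. The exact numbers matter less than the shape of the curve: each additional year multiplies the volume to review by more than three.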

The foundational analogy: compilers

To grasp what is at stake, a historical detour is in order. At the dawn of compilers, many developers did not trust them. They used the compiler, certainly, but systematically reviewed the produced assembly to ensure it matched what they would have written themselves. This practice, legitimate during an acculturation phase, quickly became untenable: systems grew too large for an exhaustive review of assembly to remain economically justifiable.

Today, we all know there is assembly under the hood. But virtually no application engineer reads it. We build robust software without inspecting that layer. We have found verifiable levels of abstraction that spare us from inspecting the substrate.

The thesis, for decision-makers, is as follows: we will forget that code exists, but we will never forget that the product exists. The challenge of the coming years is to build the methodological conditions for this delegated trust, exactly as we collectively built the conditions for trusting compilers.

A problem as old as civilization

It is worth emphasizing a point that engineers, as individual contributors by culture, often struggle to accept: managing expertise one does not personally master is one of the oldest problems in human organization.

- How does a technical director oversee an expert in a domain where they are not themselves an expert?

- How does a product manager validate a feature without reading all the underlying code?

- How does an executive verify their accountant's work without being a certified accountant themselves?

These questions have well-tested answers, sometimes centuries old:

- The CTO writes acceptance tests that validate the expected behavior of the system without presuming its implementation.

- The product manager uses the product and ensures it works as specified, without reviewing the code.

- The executive samples control points they understand, checks data slices, and thereby builds aggregate confidence in the overall financial model.

These mechanisms do not represent managerial blindness: they represent the art of verifiable abstraction layers. Every competent manager in the real world already practices this controlled delegation. What is new, for software engineers, is having to apply it to their own craft.

The only current blind spot: technical debt

Let us be honest: vibe coding in production currently has one methodological limitation, and it is called technical debt. Functional correctness, stability, and security can all be measured through external tests. Technical debt, by contrast, is still best measured by reading the code. No reliable metric exists today that can detect, without expert human inspection, that a vibe-coded module is accumulating design choices that will mortgage future evolutions.

This limitation does not invalidate vibe coding. It constrains the scope of its application.

The leaf-node strategy: a doctrine for decision-makers

The operational response to this limitation can be stated simply: concentrate vibe coding on the leaf nodes of your architecture.

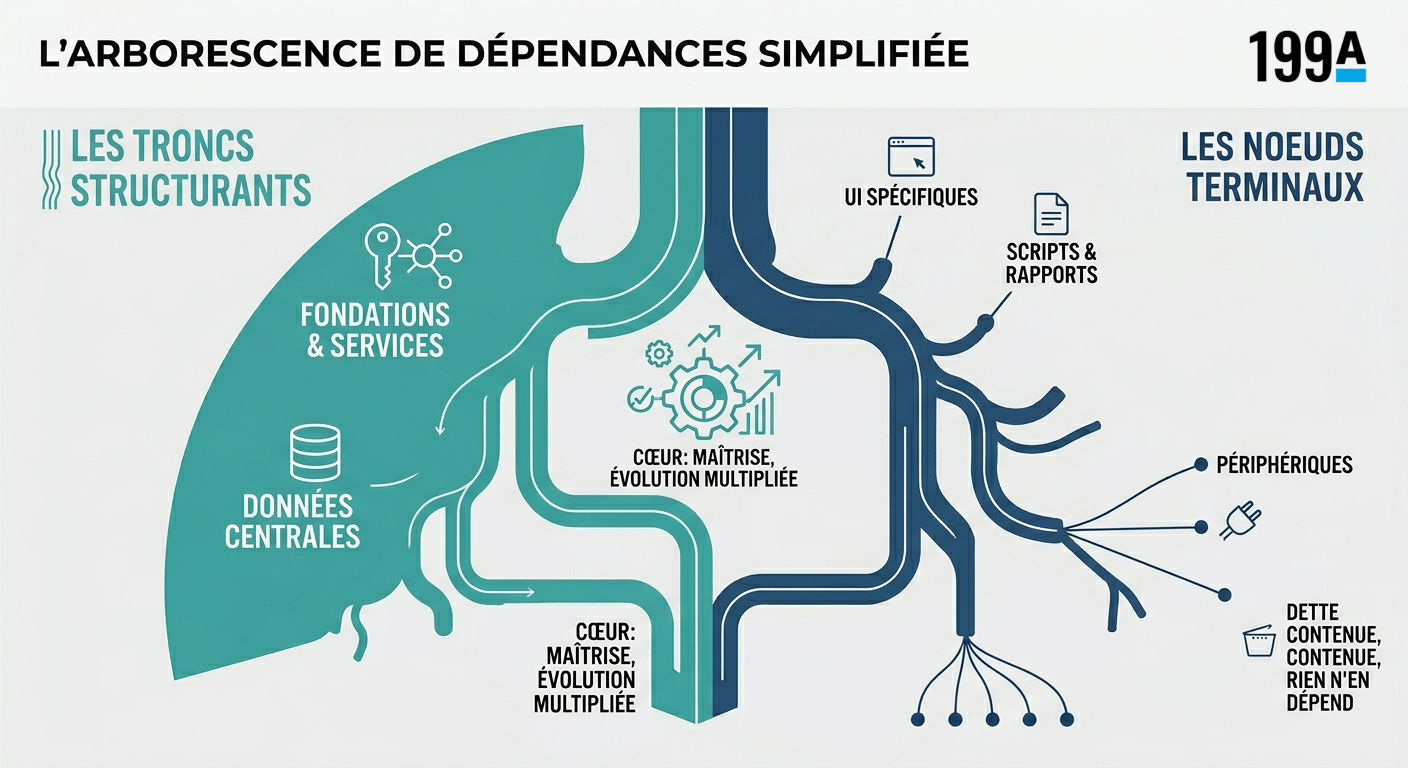

In the dependency tree of a software system, we distinguish:

- Structural trunks and branches: foundational layers upon which other components rest. Data architecture, authentication systems, service orchestration, shared internal APIs. This is the core that your engineers must continue to master in depth, because these areas evolve, are extended, and any debt there has a multiplying effect.

- Leaf nodes: terminal features on which nothing depends. Specific user screens, export scripts, ad hoc reports, peripheral integrations, one-shot connectors. If technical debt lodges there, it is contained. These areas change little, serve as no foundation for other code, and any eventual rewrite remains local.

The strategic recommendation is therefore as follows: authorize vibe coding where debt is architecturally confined, prohibit it in the structural core, and invest in an explicit mapping of this boundary. This mapping is now a governance deliverable as important as your target architecture diagram.
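Once dependencies are known, the boundary can be computed mechanically. The Python sketch below assumes you can export a map from each module to the modules it imports. The module names are hypothetical. The sketch flags modules with no dependents as leaf nodes. It also counts transitive dependents as a rough proxy for how far debt in a given module would propagate.

```python
def direct_dependents(deps: dict[str, set[str]]) -> dict[str, set[str]]:
    """Invert a module -> dependencies map into module -> direct dependents."""
    dependents: dict[str, set[str]] = {module: set() for module in deps}
    for module, requires in deps.items():
        for dep in requires:
            dependents.setdefault(dep, set()).add(module)
    return dependents


def transitive_dependents(deps: dict[str, set[str]], module: str) -> set[str]:
    """Every module that depends on `module`, directly or indirectly."""
    dependents = direct_dependents(deps)
    seen: set[str] = set()
    stack = [module]
    while stack:  # iterative DFS; `seen` also guards against cycles
        current = stack.pop()
        for parent in dependents.get(current, set()):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def leaf_nodes(deps: dict[str, set[str]]) -> set[str]:
    """Modules nothing depends on: candidates for the vibe-coding zone."""
    return {module for module, ds in direct_dependents(deps).items() if not ds}


# Hypothetical architecture: module -> modules it depends on.
architecture = {
    "auth": set(),
    "data_model": set(),
    "internal_api": {"auth", "data_model"},
    "billing_screen": {"internal_api"},
    "csv_export": {"internal_api"},
    "adhoc_report": {"data_model"},
}

if __name__ == "__main__":
    print("leaf nodes:", sorted(leaf_nodes(architecture)))
    for module in sorted(architecture):
        print(module, "blast radius:", len(transitive_dependents(architecture, module)))
```

In practice the dependency map would come from your build system or an import analyzer. The output of such a script is a starting point for the governance document, not a substitute for architectural judgment about which zones will be extended later.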

Case study: a 22,000-line pull request, merged with confidence

A recent episode illustrates the feasibility of this doctrine at industrial scale. Within Anthropic, a 22,000-line change was merged into a production codebase dedicated to reinforcement learning — code largely written by Claude itself.

How could such an operation be conducted responsibly? Four principles were applied with rigor:

- Massive upfront human investment. This was not a single prompt followed by a merge. Several days of human work were dedicated to elaborating requirements, iteratively guiding the model, and specifying the target system.

- Focus on leaf nodes. The bulk of the change touched peripheral areas where it was acceptable to carry some technical debt, because they did not constitute a foundation for other developments.

- Intensive human review of structural parts. The few components that needed to remain extensible were reviewed line by line by experienced engineers.

- Explicit design for verifiability. Stress tests were designed upfront, and the system was built around humanly verifiable inputs and outputs, so that control points exist independently of code reading.
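The idea of control points that do not require reading code can be illustrated with a small stress harness. The Python sketch below is generic. It is not the harness used in the case study, whose details the text does not give. `dedupe_sorted` is a hypothetical stand-in for a model-written function. The harness sees only inputs and outputs and checks them against a behavioral contract.

```python
import random
from typing import Any, Callable


def stress_test(fn: Callable[[Any], Any],
                generate: Callable[[random.Random], Any],
                check: Callable[[Any, Any], bool],
                runs: int = 1000,
                seed: int = 0) -> list[Any]:
    """Call `fn` on `runs` generated inputs and return every input for which
    `check` rejects the output or an exception is raised. Only inputs and
    outputs are inspected, never the implementation."""
    rng = random.Random(seed)  # seeded so any failure is reproducible
    failures = []
    for _ in range(runs):
        x = generate(rng)
        try:
            ok = check(x, fn(x))
        except Exception:
            ok = False
        if not ok:
            failures.append(x)
    return failures


def dedupe_sorted(values: list[int]) -> list[int]:
    # Hypothetical stand-in for a model-written function under test.
    return sorted(set(values))


def random_int_list(rng: random.Random) -> list[int]:
    return [rng.randint(-50, 50) for _ in range(rng.randint(0, 30))]


def dedupe_is_correct(inp: list[int], out: list[int]) -> bool:
    # Behavioral contract: strictly increasing, same distinct values as input.
    strictly_increasing = all(a < b for a, b in zip(out, out[1:]))
    return strictly_increasing and set(out) == set(inp)


if __name__ == "__main__":
    print(len(stress_test(dedupe_sorted, random_int_list, dedupe_is_correct)))
    # A plausible bug (forgetting to deduplicate) is caught without reading code.
    print(len(stress_test(sorted, random_int_list, dedupe_is_correct)) > 0)
```

The contract in `dedupe_is_correct` is short enough for a reviewer to verify by eye. That is the property the case study relies on: trust moves from the implementation to a small, readable check.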

The result: confidence in this change equivalent to that obtained for any other change in the codebase, but delivered in a fraction of the time that full manual writing followed by exhaustive review would have required.

Even more interesting for a decision-maker: the knock-on effect on product strategy. When the marginal cost of a feature drops from two weeks to one day, the portfolio calculus changes. Projects previously dismissed as too costly become trivial. You are not simply doing the same things faster: you are doing things you would never have contemplated.

The Claude PM manual: operational methodology

The mantra I recommend to all teams engaged in this transition: "Don't ask what Claude can do for you, ask what you can do for Claude."

When you vibe code, you are no longer an engineer — you are a product manager for an AI. This requires a profound cultural shift.

Context is the new code

The temptation is strong to treat AI like a chatbot: a short request, a quick fix, a feature asked for in three lines. This practice, inherited from early interactions with conversational assistants, is counterproductive as soon as the stakes rise.

Consider: if a new engineer joined your team, would you hand them a feature on day one with a single-sentence brief? Obviously not. You would walk them through the codebase, describe the architectural constraints, internal conventions, non-functional requirements, and known edge cases.

This is exactly what the vibe coder must do with Claude. Fifteen to twenty minutes of context gathering before the execution prompt is a normal investment. This phase often takes the form of a separate prior conversation, during which the AI explores the codebase, identifies the impacted files, and spots the patterns to follow. The deliverable of this phase is a documented plan, which is then injected — either into a new session or as an execution instruction — into the model that will perform the work.
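One way to keep the exploration phase and the execution phase cleanly separated is to treat the plan as a structured document that you render into the execution prompt. The Python sketch below is an illustration only. The `Plan` fields, file paths, and prompt wording are hypothetical, and no Claude or Cursor API is assumed. The rendered text is simply what you would paste into a fresh session.

```python
from dataclasses import dataclass, field


def _bullets(items: list[str]) -> str:
    return "\n".join(f"- {item}" for item in items) or "- (none)"


@dataclass
class Plan:
    """Deliverable of the exploration session (field names are hypothetical)."""
    goal: str
    impacted_files: list[str]
    patterns_to_follow: list[str]
    hard_constraints: list[str]
    left_to_model: list[str] = field(default_factory=list)

    def to_execution_prompt(self) -> str:
        sections = [
            f"Goal:\n{self.goal}",
            "Files you will likely touch:\n" + _bullets(self.impacted_files),
            "Existing patterns to follow:\n" + _bullets(self.patterns_to_follow),
            "Hard constraints (do not deviate):\n" + _bullets(self.hard_constraints),
        ]
        # Only mention freedoms when some were explicitly granted.
        if self.left_to_model:
            sections.append("Use your own judgment on:\n" + _bullets(self.left_to_model))
        return "\n\n".join(sections)


plan = Plan(
    goal="Add a CSV export button to the monthly report screen.",
    impacted_files=["ui/report_screen.py", "exports/csv_export.py"],
    patterns_to_follow=["Reuse the existing export service interface"],
    hard_constraints=["No changes to the shared data model"],
    left_to_model=["Helper names and file layout inside exports/"],
)

if __name__ == "__main__":
    print(plan.to_execution_prompt())
```

Separating hard constraints from the points left to the model's judgment follows the advice against over-constraining. Keeping the plan as data also makes it easy to version alongside the code it produced.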

In practice, this protocol markedly increases the success rate of ambitious tasks.

Avoid over-constraining

Paradoxically, although context is crucial, one must guard against over-prescription. Current models deliver their best results when the framework is clear but not confining. Where the how does not matter, give the model freedom. Where you have a strong architectural preference, be explicit. Think of a competent junior engineer, who needs neither micromanagement nor abandonment.

Test-driven development revisited

Test-driven development (TDD) finds a new lease on life here. But beware of a classic pitfall. Left to its own devices, the AI tends to produce tests coupled too tightly to the implementation, which break at the slightest change. The remedy is to be prescriptive about form. Explicitly request three end-to-end tests, kept at the behavioral level: one happy path and two error cases. This minimal set stays humanly readable, and it is often the only part of the code that the vibe coder actually inspects.
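Here is a Python sketch of the three-test format. `export_report` is a hypothetical leaf-node feature standing in for model-written code. The tests assert only on observable behavior: the CSV round-trips, and bad inputs are rejected. They never touch internal helpers, so they survive a rewrite of the implementation. They use plain `assert`, so pytest can collect them as they are, or you can call them directly.

```python
import csv
import io


def export_report(rows: list[dict], fmt: str) -> str:
    """Hypothetical leaf-node feature under test (stands in for generated code)."""
    if fmt != "csv":
        raise ValueError(f"unsupported format: {fmt}")
    if not rows:
        raise ValueError("nothing to export")
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def test_happy_path_round_trips_rows():
    rows = [{"id": "1", "total": "42"}, {"id": "2", "total": "7"}]
    # Parse the output back instead of comparing exact bytes or line endings.
    parsed = list(csv.DictReader(io.StringIO(export_report(rows, "csv"))))
    assert parsed == rows


def test_rejects_unknown_format():
    try:
        export_report([{"id": "1"}], "xlsx")
    except ValueError:
        return
    raise AssertionError("expected ValueError for an unsupported format")


def test_rejects_empty_input():
    try:
        export_report([], "csv")
    except ValueError:
        return
    raise AssertionError("expected ValueError for empty input")
```

These three functions are the artifact a vibe coder would read and approve. The body of `export_report` could be regenerated from scratch without any test needing to change.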

Compaction and context hygiene

In long sessions, coherence degradation (erratic renaming, convention drift) is a real problem. The best practice is to compact the session at natural breakpoints, as a human would before a lunch break. A proven workflow: have Claude produce a documented plan during the exploration phase, compact, then begin execution based on that document. This mechanism reduces a 100,000-token conversation to a few thousand, without losing the essentials.

What products should be built?

For publishers and decision-makers overseeing platforms, a major strategic opportunity is emerging: systems that are safe by construction. In these frameworks, the sensitive parts (authentication, payment, persistence) are pre-built and locked down. The vibe coder gets a well-delimited sandbox for the functional layer.

The archetypal example already exists: Claude artifacts, where generated code runs in a restricted frontend environment, without a backend, without secrets, without significant attack surface. But there is an entire market to invent here: BaaS (backend as a service) solutions designed to absorb vibe coding without turning every application into a security sieve. This is probably one of the most promising investment axes for the coming years.
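The principle of a locked-down core with a narrow sandbox can be sketched as a capability object. In the Python sketch below, every name (`Sandbox`, `save`, `list_mine`, `PermissionDenied`) is hypothetical and does not describe any existing BaaS product. The platform authenticates the user before the generated handler runs. The handler receives only a verified user id and an allowlist of writable collections. Python cannot enforce this isolation on its own, because the underscored attributes remain reachable. A real platform would enforce the boundary at the process or network level, as artifacts do with their restricted frontend environment.

```python
from dataclasses import dataclass, field


class PermissionDenied(Exception):
    pass


@dataclass
class _Store:
    """Platform-owned persistence, meant to stay outside the sandbox."""
    records: dict[str, list[dict]] = field(default_factory=dict)


class Sandbox:
    """Narrow capability object handed to generated handlers."""

    def __init__(self, store: _Store, user_id: str, writable: frozenset[str]):
        self._store = store
        self._user_id = user_id  # already verified by the platform
        self._writable = writable

    @property
    def user_id(self) -> str:
        return self._user_id

    def save(self, collection: str, data: dict) -> None:
        if collection not in self._writable:
            raise PermissionDenied(f"collection not writable: {collection}")
        # The platform stamps ownership last, overriding any "owner" key in data.
        record = {**data, "owner": self._user_id}
        self._store.records.setdefault(collection, []).append(record)

    def list_mine(self, collection: str) -> list[dict]:
        return [r for r in self._store.records.get(collection, [])
                if r["owner"] == self._user_id]


def vibe_coded_handler(sb: Sandbox, note: str) -> int:
    # Hypothetical generated code: it can only use what the sandbox exposes.
    sb.save("notes", {"text": note})
    return len(sb.list_mine("notes"))


store = _Store()
alice = Sandbox(store, "alice", frozenset({"notes"}))
bob = Sandbox(store, "bob", frozenset({"notes"}))
```

With this design, a careless or malicious handler can at worst corrupt its own user's notes. It cannot spoof ownership, and it cannot reach collections such as payments that the platform never granted.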

Embracing the exponential: what it really means

The final principle, embrace exponentials, is the most misunderstood. "Models will improve" is a weak reading, almost trivial. The strong reading is far more demanding: models will improve faster than our ability to imagine it.

Let us return to the founding intuition. An engineer from the 1990s, working with a few megabytes of RAM, would have struggled to imagine a world where personal machines hold terabytes. This is not a factor of two or four: it is a factor of one million. That is what three decades of exponential growth produce.
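The arithmetic behind "a factor of one million" is worth making explicit, because it shows how few doublings such a gap requires. A factor of $10^6$ corresponds to about twenty doublings. The durations below simply multiply that count by two assumed doubling periods. The first is the seven-month period cited earlier for task length. The second is an eighteen-month period often associated with Moore's law. Both assume the rate holds constant, which is a strong assumption.

```latex
n = \log_2\!\left(10^{6}\right) = \frac{6}{\log_{10} 2} \approx \frac{6}{0.30103} \approx 19.93
\qquad \left(2^{20} = 1\,048\,576\right)

T_{7\,\text{mo}} \approx 19.93 \times 7\ \text{months} \approx 139.5\ \text{months} \approx 11.6\ \text{years}
\qquad
T_{18\,\text{mo}} \approx 19.93 \times 18\ \text{months} \approx 359\ \text{months} \approx 29.9\ \text{years}
```

If the seven-month rate held, a millionfold gap would close in a little over a decade rather than three.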

The right strategic question is therefore not "what will we do if models are twice as good in two years?" — that question is too small. The right question is: "what organization, what processes, what architecture will we have built to remain relevant in the face of models whose capabilities will have been multiplied by orders of magnitude?"

Recommendations for decision-makers

For the technical directors, chief information officers, and product directors who will read this article, here are the priority action areas:

1. Explicitly map your leaf nodes. Produce a governance document that distinguishes the protected architectural core from the peripheral zones eligible for vibe coding. Make it a living deliverable, revised each quarter.

2. Invest in verifiability. Automated security audits, stress tests, validation harnesses independent of the code: these artifacts become the true line of defense when human reading is no longer economically practicable. These are the new internal controls of your system.

3. Train your teams in the product manager posture. Software engineering is shifting toward piloting agents. This transition requires deliberate practice in writing specifications, designing test protocols, and mastering the art of contextualized prompting.

4. Build environments that are secure by construction. Whether you are a user or producer of platforms, the future belongs to environments where vibe coder errors are structurally contained.

5. Prohibit vibe coding in the structural core, today. Prudence demands a clear boundary. Models will improve and the boundary will recede, but at every moment you must know where it stands.

6. Prepare for the shift. Those who, in two years, still require their teams to review every line produced by AI will find themselves in a position of structural competitive disadvantage. Not because they will be wrong in principle, but because their organization will have become the bottleneck of its own productivity.

Conclusion

Vibe coding in production is not a geek's whim in search of novelty. It is the pragmatic response, still in its early stages, to a question that will impose itself on the entire software industry within the next eighteen months: how do we productively leverage systems whose production speed exceeds our human capacity for line-by-line verification?

The answer lies neither in refusing the tool nor in embracing it without guardrails. It lies in bringing into our own craft the methods that humanity has refined over centuries in every discipline where one must manage expertise one does not personally master. These methods are rigorous specification, verification through abstraction, architectural confinement, and targeted sampling.

Decision-makers who understand this transition today, who put in place appropriate governance mechanisms, and who train their teams in the AI product manager posture, are building a lasting competitive advantage. The others will learn the hard way that in an exponential regime, falling behind is difficult to recover from.

Forget that code exists, but never that the product exists. This phrase, properly understood, is the compass for the years ahead.