The Noise of Bots

When machines march their armies through our minds

There was a time when danger could be recognized by its sound. The noise of boots on pavement, rhythmic, metallic, threatening, signaled the approach of an ideology ready to crush everything that stood in its way.

We learned to recognize that noise in history books, in black-and-white archives, in the testimonies of those who had the misfortune to witness it firsthand.

Today, the threat no longer echoes on the pavement.

It pulses in our news feeds, it rumbles in our notifications, it slips between two posts from loved ones to deposit, silently, the seeds of a thought that should never have crossed the gates of collective reason.

The noise of bots. Imperceptible. Omnipresent. Devastating.

A silent infestation

Let's start with the facts, since this is precisely what public debate is being deprived of.

According to the 2024 Imperva Bad Bot Report, bots (automated programs, many of them masquerading as human users) now represent 49.6% of all global internet traffic. Human traffic still holds a majority, but only barely. In other words: the web is on the verge of speaking more to machines than to people.

Let this figure sink in. Take the time to measure its quiet obscenity.

On social platforms, the picture is equally striking. A study by the Pew Research Center published as early as 2018 estimated that 66% of links to the most popular news sites on Twitter were shared by automated accounts, not by humans. In 2023, researchers from the Oxford Internet Institute estimated that between 9 and 15% of active accounts on major social platforms are bots, with some sectoral analyses reaching up to 20%. Elon Musk himself, before buying Twitter and turning it into the laboratory of this disintegration, had brandished this figure of 20% fake accounts as a bargaining chip, only to make his peace with it once in command.

These are not abstract statistics. These are the invisible architectures that structure what you believe to be public opinion.

The algorithm, willing accomplice

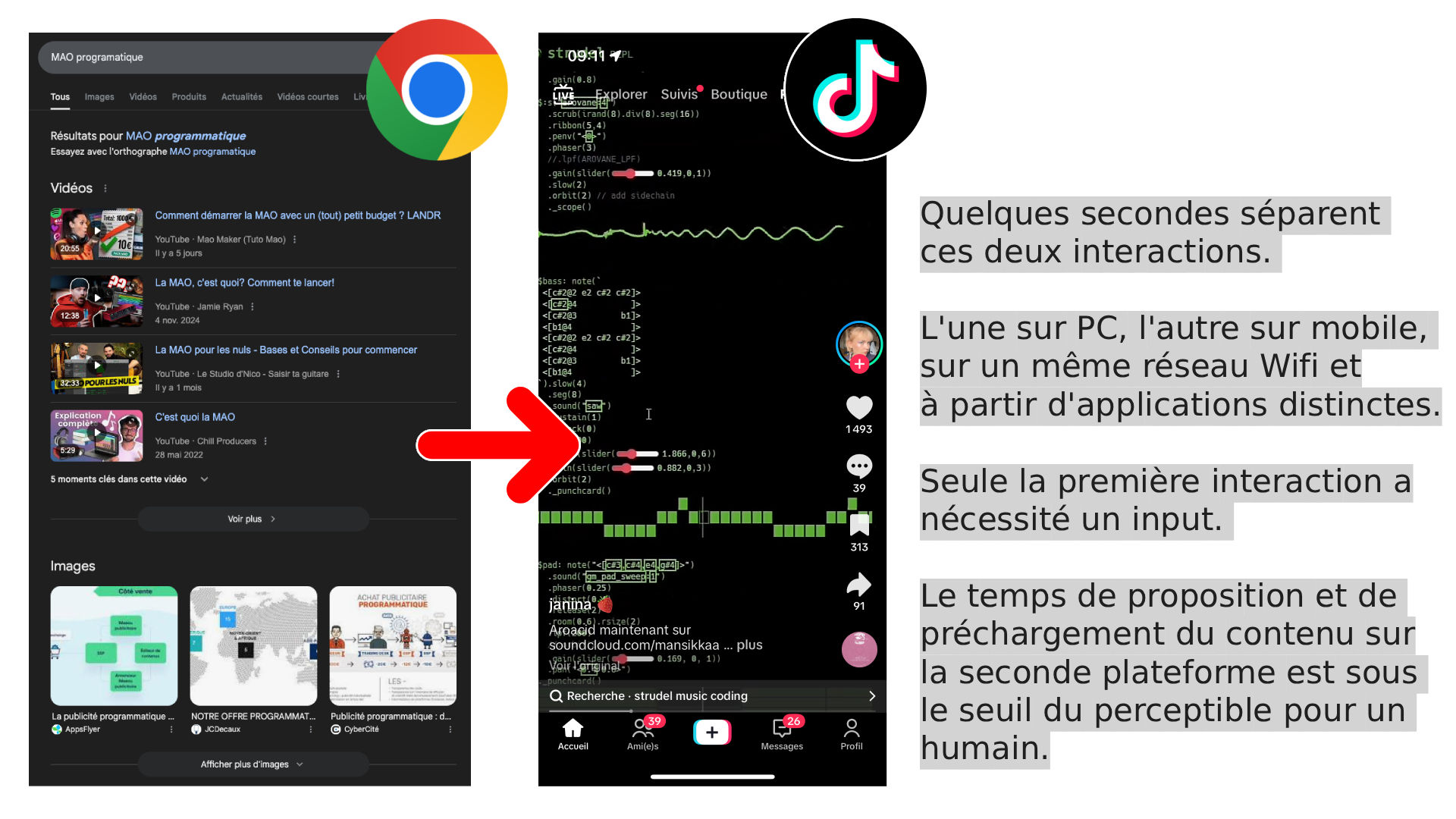

But bots alone would not be enough to explain the scale of the disaster. For an army of robots to be effective, it needs favorable terrain. And the platforms prepare this terrain with surgical precision.

Recommendation algorithms, the optimization systems that decide what you see, in what order and how often, are not neutral arbiters. They are instruments for maximizing engagement, the sacred metric on which the stock market valuation of each platform depends. But engagement is not reflection. Engagement, in the language of clicks, is anger, fear, indignation, scandal: primary emotions that short-circuit analysis and reason.

An internal Meta study, revealed in 2021 by whistleblower Frances Haugen, showed that the company knew perfectly well that its algorithms amplify hateful and misleading content, because this content generates more interactions than informative or nuanced content. The conclusion of the internal study was unequivocal.

Meta's decision? Change nothing essential.

The result of this equation (bots generating extreme content, algorithms amplifying emotionally charged discourse) is an echo chamber on a civilizational scale. Legitimate voices, those that nuance, contextualize, question, self-correct, are systematically penalized by mechanics designed to reward radicalism and simplicity.

In the digital ecosystem, Darwin has been reprogrammed: what survives is not the best adapted but the most outrageous.

The hate factory on an industrial scale

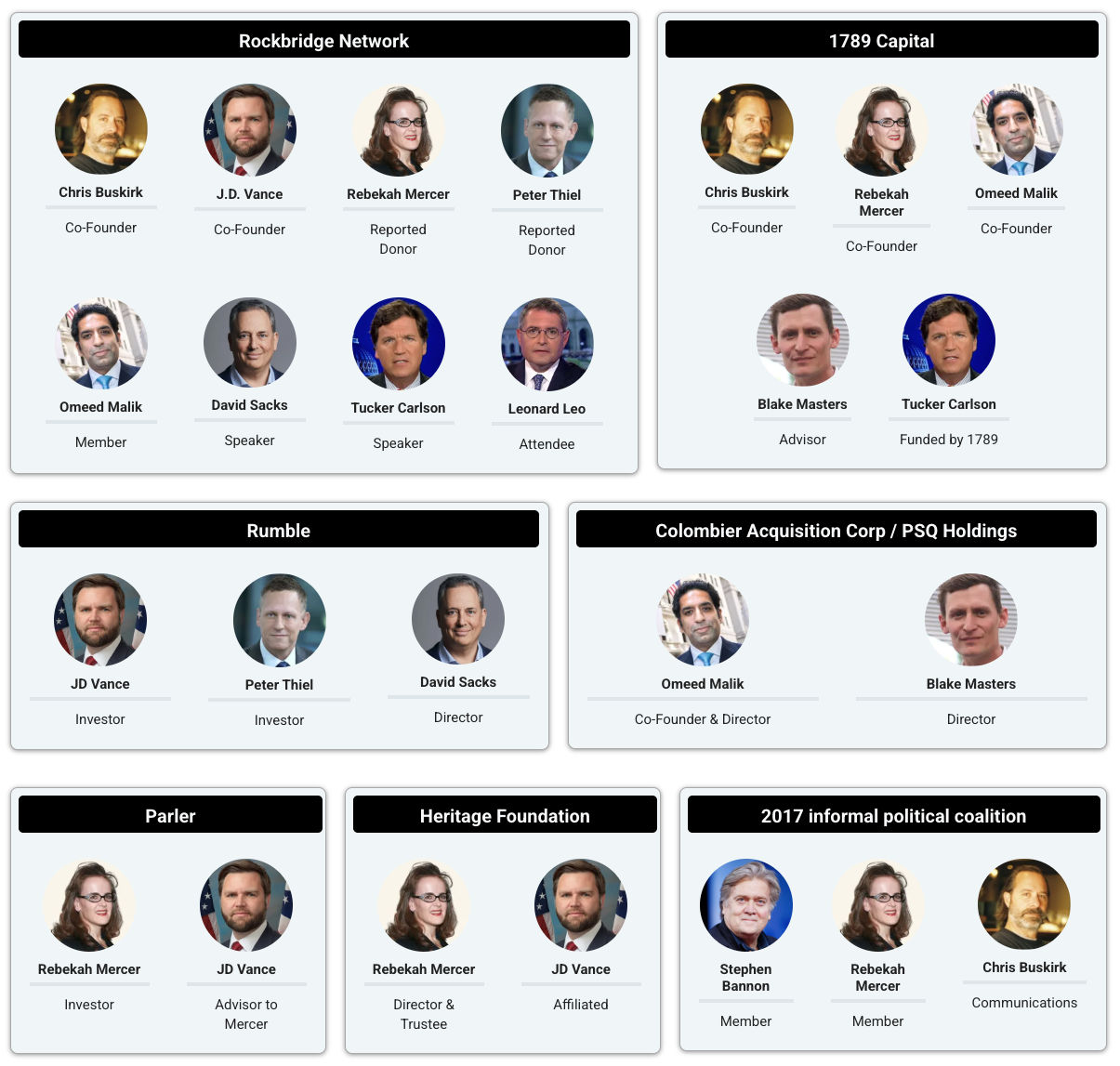

Let's not hide from the truth: this technical infrastructure is not the result of chance. It is, to a large extent, the product of deliberate intentions, whether industrial, political or state.

Coordinated bot-driven influence operations have been documented during every major election of the last ten years: the 2016 US presidential election, Brexit, and elections in Brazil, France, Italy and India. EU DisinfoLab, an independent Brussels-based NGO, and the Stanford Internet Observatory (wound down in 2024 under political and legal pressure) have mapped entire networks of automated accounts deployed to amplify nationalist, xenophobic and anti-democratic narratives. Russia, China and Iran, but also private actors working for domestic clients, have industrialized this practice.

What was once a costly psychological operation, reserved for states and major powers, has become accessible for a few hundred dollars on digital black markets. Buying ten thousand fake followers, launching a coordinated harassment campaign against a journalist, making a conspiracy hashtag "trend": all this can now be ordered like a pizza.

And meanwhile, traditional media watch.

The guilty silence of information guardians

Let's talk about traditional media. In all intellectual honesty, they deserve to be put on trial.

Faced with this massive infestation of the informational space, what has been the response of major newsrooms? Essentially: the race for clicks, the reproduction of viral trends, the recycling of controversies manufactured by bots into "real societal debates". How many times have we seen journalists from 24-hour news channels "cover" a hashtag as if it represented the opinion of a significant segment of the population, without ever verifying the origin of the accounts that drove it? How many "controversies" have been elevated to the rank of major social facts because a network of automated accounts had artificially propelled them into the public sphere?

The media, instead of being the critical filter between reality and perception, have become amplifiers of the noise of bots, usually involuntary, sometimes very deliberate. Worse: in their quest for audiences and advertising revenue, some have adopted the same logic as the platforms, rewarding emotion over analysis, conflict over nuance, simplification over complexity. There is no conspiracy in this observation. There is something more banal and more frightening: the progressive capitulation to the laws of the attention market.

Polarization, symptom of reason in retreat

What this infernal mechanism produces is no longer just a problem of "information quality". It is a deep democratic pathology.

Political polarization in Western democracies has reached levels unseen since World War II. Longitudinal studies conducted by the Pew Research Center in the United States show that the ideological distance between Democrats and Republicans has more than doubled in thirty years, and this acceleration coincides with the rise of algorithmic social networks. In Europe, the progression of far-right parties in countries that seemed immune (Sweden, Finland, the Netherlands, Germany) follows a curve similar to that of platform penetration and its logic of radicalization through engagement.

This is not a coincidence. It is a causal link that academic research documents with increasing precision. The work of Renée DiResta, Kate Starbird and Yochai Benkler has established the mechanisms by which an active minority, boosted by automated tools, can impose its representations on a silent majority, creating the illusion of extremist consensus where there is only artificial noise.

We manufacture fascisms on demand, and we serve them in continuous flow.

The noise of bots is this: not the frank and unapologetic brutality of brown shirts marching on pavement, but soft infiltration, progressive contamination, normalization through repetition. When a conspiracy or nationalist narrative is hammered a hundred times a day from a thousand different accounts, the human brain, that formidable organ shaped by evolution to detect patterns and adapt to its social environment, ends up integrating it as real data. This is what cognitive psychology calls the illusory truth effect: repetition creates credibility, independently of any factual foundation.

Education: the only dam against collapse

There is an exit from this labyrinth. It is uncomfortable because it is slow, demanding, and generates no short-term profit. It is called education.

Not education as it is often practiced (knowledge transmission, preparation for employability, drilling in skills) but an education resolutely oriented toward the formation of judgment. An education that teaches how to question a source before sharing it, to distinguish verified information from emotional assertion, to spot the mechanics of manipulation, to tolerate uncertainty rather than take refuge in the comfortable certainty of one's own camp.

Media and information education (what English speakers call media literacy and Finns have practiced since the 1990s with measurable results on resistance to disinformation) should be the first budget item of any democracy that cares about its survival. Instead, we treat it as a pedagogical extra, squeezed between two hours of exam preparation and a civic education course rushed through in twenty minutes.

We pour ever more computing power, algorithmic ingenuity and private capital into systems that we know perfectly well weaken democracy every day, and we treat critical thinking as a budget line to be trimmed. This is a form of slow-release collective suicide.

Without conscience, all this science leads us to ruin. This is not a romantic metaphor. This is a diagnosis. The most sophisticated information dissemination tool ever built by humanity is being used to make it swallow its own most archaic demons (nationalism, hatred of the other, cult of the leader, contempt for truth) at a speed and scale that neither Goebbels nor his contemporaries could ever have imagined.

Hearing the noise before it's too late

History, when it chose to be lenient, sometimes gave warning. The noise of boots on pavement could be heard before tanks crossed borders. Some heard it. Too few. Too late.

Today, the noise of bots is everywhere. It is in your phone, in the trends you check in the morning, in the comment threads you browse before sleeping. It shapes you without asking your opinion, it shrinks your cognitive horizon, it normalizes the unacceptable through the simple mechanics of industrial repetition.

The question is not whether we can silence it. We cannot, not entirely, not without sacrificing fundamental freedoms. The question is whether we are still capable of hearing it for what it is: not the voice of the people, not the authentic pulse of a society, but the artificial noise of machinery built to divide us, radicalize us, and ultimately subjugate us.

Answering this question requires citizens capable of critical analysis, media capable of honesty, regulators capable of courage, and educators capable of transmitting something other than skills: an intellectual posture, a reflex of lucidity.

We know the alternative. It has a recognizable cadence. It resonates in a different key, but with the same terror at the end.