Learning continuity in the age of AI

How to escape brain atrophy when we need our minds most

Artificial intelligence, by offering an instant response to every question, silently short-circuits the cognitive mechanisms that make learning not a result but a process: effort, frustration, memory reactivation, the slow construction of lasting mental schemas. What we gain in fluidity, we risk losing in depth. And it is precisely when the complexity of the world demands more agile, more critical and more autonomous minds that we are tempted to delegate our intelligence to a machine.

AI, one-way mirror of our intelligence

In 2023, a law student at a major American university submitted a sixty-page thesis entirely written by a generative language model. His professors detected nothing unusual. Neither in the structure, nor in the arguments, nor in the style. Everything was coherent, documented, convincing. What was missing, however, was invisible: no trace of the intellectual effort that transforms information into knowledge, data into understanding, an answer into judgment. The student had obtained a grade. He had learned nothing.

Artificial intelligence is a one-way mirror: it reflects all accumulated human knowledge, but conceals from those who consult it the image of their own thinking as it fades away. On one side, it represents one of the most spectacular amplifications of access to knowledge since the invention of printing: instantaneous, personalized, available in all languages and at all hours. On the other, it silently short-circuits the cognitive mechanisms that make learning not a result but a process: effort, frustration, memory reactivation, the progressive construction of mental schemas.

This tension is no mere anecdote. It defines one of the deepest educational challenges of our time. To understand it, we must go back to the long history of cognitive revolutions, examine what neuroscience tells us about the learning brain, measure the risks specific to the era of the algorithmic oracle, and finally propose concrete ways to avoid sacrificing human intelligence on the altar of digital convenience.

Five interdependent themes structure this map of the issues.

A concern as old as progress

The history of education is marked by technological revolutions, and each of them has triggered the same fundamental anxieties. Socrates, as reported by Plato in the Phaedrus, feared that writing would weaken memory and instill in minds an appearance of knowledge rather than true knowledge. He was not wrong about the diagnosis, but wrong about the conclusion: writing did not make humanity stupid, it reconfigured the way humanity learns. The Greek paideia (the educational and cultural ideal of ancient Greece), based on dialectics and the effort of thought, valued precisely what writing threatened to dilute: the living confrontation with uncertainty.

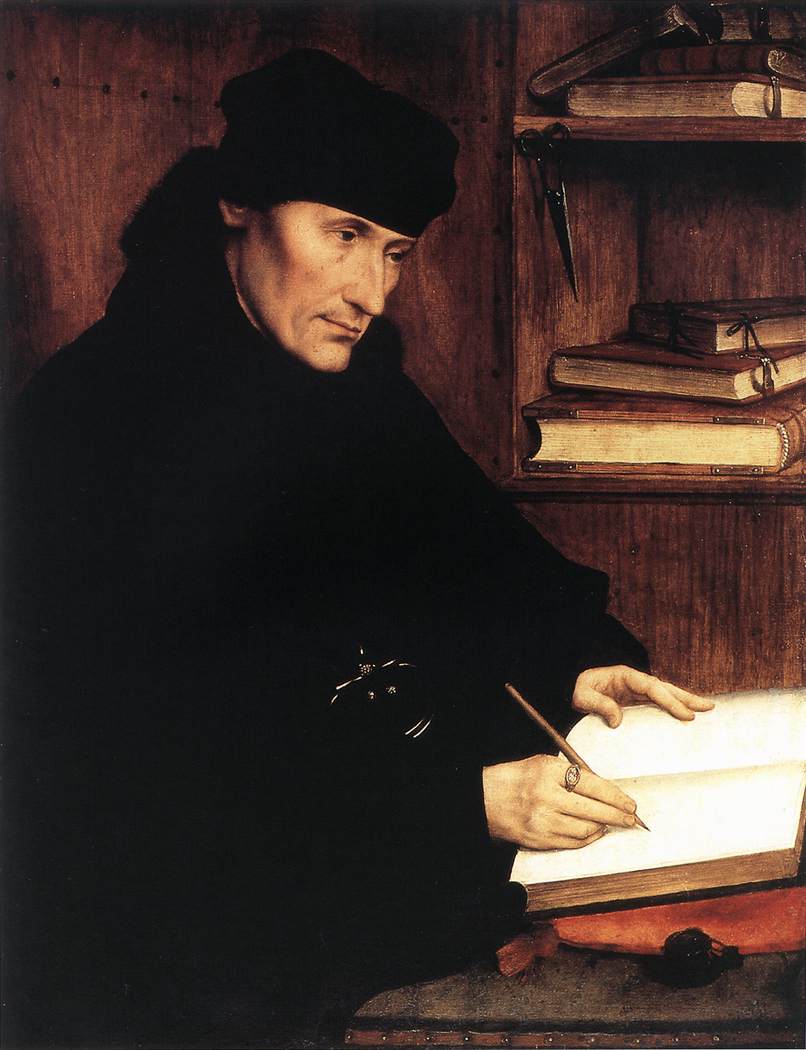

In the Middle Ages, copyist monks embodied another figure of learning through effort. Reproducing a text by hand was not a mechanical task but a total memory practice, an immersion in the very structure of another's thought. When Gutenberg revolutionized the dissemination of ideas, Erasmus was enthusiastic, but others perceived in the multiplication of books a threat to the formation of critical thinking: why bother thinking for oneself if everything is already written?

Erasmus, by Quentin Metsys, 1517.

The 18th and 19th centuries saw the birth of republican schooling, championed by Condorcet and his dream of equality through knowledge. But Rousseau had already warned against purely transmissive instruction, the kind that fills minds without forming them. Dewey, at the turn of the 20th century, theorized what we would today call experiential learning: learning means doing, fumbling, starting over. Then came radio, television, and with them McLuhan and Postman, who warned about the superficiality of a culture based on passive consumption of images and sounds. Each era has had its prophets of cognitive decline, and each time they have been partly right.

AI is not an absolute break. It is the latest act in a millennial dialectic between the tool and the intelligence that uses it. But its intensity, speed and ubiquity give it an unprecedented dimension. It is not content to disseminate knowledge: it claims to produce it, synthesize it, and now to embody it pedagogically. How can we not see in this the point where the medium merges not only with the message but also with its recipient? This is where the challenge changes in nature.

Effort versus fluidity: what we lose by saving time

For millennia, learning methods have been slow, costly and often painful. It is precisely this slowness that guaranteed their effectiveness. Spaced repetition, which builds on the memory experiments Hermann Ebbinghaus conducted in the late 19th century, rests on a simple principle: it is the regular return to information, at increasing intervals, that durably anchors it in long-term memory. Socrates' maieutic method rested on a similar logic: forcing the student to give birth to knowledge himself, by placing him face to face with his own contradictions. These processes are cognitively costly. And it is precisely their cost that makes them effective.

New digital approaches have optimized access, but sometimes at the expense of depth. Platforms like Khanmigo or Duolingo Max offer remarkable pedagogical personalization, adapting pace, level and format to each learner's needs. Gamification captures attention and generates engagement, at least initially. Micro-learning allows learning to be integrated into the interstices of daily life. These innovations are real and valuable.

But they have a blind spot: they favor extrinsic motivation (reward, badge, score) at the expense of intrinsic motivation, which alone enables lasting and self-directed learning. Kahneman (whom Yann LeCun now readily quotes in his talks), in his distinction between System 1 (fast, automatic thinking) and System 2 (slow, deliberative thinking), offers us a valuable analytical framework: digital tools designed for fluidity engage System 1 almost exclusively. Yet it is System 2, laborious, uncomfortable, resistant, that builds deep understanding.

The answer is not to reject new methods, but to recognize that they require conscious pedagogical governance. AI optimizes access to knowledge, but it does not replace the slow and costly mechanisms that forge human intelligence: effort, frustration, and time.

What the brain loses when a machine thinks in its place

Neuroscience today offers striking insight into the effects of cognitive externalization. The principle of brain plasticity, formalized by Donald Hebb, holds that neural connections are strengthened by repeated use and, by extension, weaken when they are no longer used. The brain literally reconfigures itself according to the tasks we entrust to it or spare it from. When a machine thinks in our place, it is not just seconds of reflection that we save: it is neural circuits that we let atrophy.

Betsy Sparrow and her colleagues described in 2011 what has become known as the "Google effect": when we know that information is immediately accessible online, we invest less effort in memorizing it. The hippocampus, central to the formation of long-term memories, is less engaged. The prefrontal cortex, which governs abstract reasoning and decision-making, is bypassed. We no longer store the knowledge itself, only where to find it. Stanislas Dehaene, in his work on learning, emphasizes that deep reading, handwriting and complex problem solving activate extensive and diffuse neural networks, exactly those that delegating to AI tends to underuse.

Metacognition, the ability to think about one's own thinking, theorized by Flavell, is perhaps the most silent victim of this delegation. A student who uses a language model to write an essay does not activate the same neural networks as one who reads, synthesizes, hesitates, reformulates and writes. The first stores answers; the second builds mental schemas. Vygotsky reminds us that cognitive development takes place in the zone of proximal development, that uncomfortable space between what one already knows how to do and what one does not yet know how to do alone. Placing an AI in this space means eliminating precisely the place where the brain grows.

The algorithmic oracle and the illusion of total knowledge

Beyond individual mechanisms, AI generates an epistemological problem of unprecedented scope: it gives answers. Not questions. Yet, the heart of intellectual formation, from Socrates to Popper, lies precisely in the art of doubting, formulating hypotheses, accepting uncertainty as a condition of living thought.

When a future doctor delegates to an algorithmic system the identification of a differential diagnosis, he may obtain a correct answer. But he does not develop the clinical reasoning that will allow him to manage atypical cases, contradictory symptoms, patients who do not correspond to training models. Nor does he develop what Michael Polanyi called tacit knowledge, that embodied, non-formalizable intelligence acquired through repeated experience, failure, and human relationship. A doctor trained on the algorithmic oracle will know how to diagnose, but will be less practiced in listening and anticipation.

There is a third, more structural risk: large language models reproduce and amplify the statistical biases of their training data. Harari, in Homo Deus, anticipates a humanity that progressively delegates its decisions to opaque systems. Cathy O'Neil, for her part, has documented how algorithms can institutionalize inequalities under a veneer of mathematical objectivity. AI cannot teach us what we have not first taught it, and by standardizing knowledge it risks impoverishing the epistemic diversity that constitutes the true richness of human thought.

Relearning how to learn in the age of machines

Faced with these risks, the temptation of radical rejection is understandable but sterile. AI is here, and its benefits are real. The question is not to choose between it and human intelligence, but to define the conditions for an emancipatory cohabitation.

For students, this implies adopting what could be called the rule of three Es: Effort as a default posture, Error as a constitutive stage of knowledge, Exploration as a refusal of prefabricated answers. Concretely, this means active memorization techniques, mind mapping, the Feynman technique of learning by explaining to others, spaced reactivation, and a deliberately limited use of AI for tasks that call for critical thinking.

For teachers, the priority is pedagogical before being technological: learning to question AI rather than obeying it, building hybrid sequences where the digital tool manages access to information while the human assumes synthesis, debate, judgment. The teacher-student relationship, in its affective and improvisational dimension, remains irreplaceable. It is in this relationship that the transmission of tacit knowledge occurs.

For institutions, reforming evaluation methods is urgent: less rote recall, more transversal projects, open situational exercises, problems without unique solutions. Training in AI's limits, its biases, its hallucinations, its inability to generate real questions, must become a fundamental competency like reading or arithmetic. We are still very far from this, although signs of goodwill seem to be emerging.

An intelligence to defend

AI is a knowledge accelerator, but without effort it becomes a brake on intelligence. This paradox is not inevitable: it is a challenge of cognitive, pedagogical and institutional governance.

In the optimistic scenario, AI becomes what the best technologies have always been: an amplifier of human capabilities, freeing the mind from mechanical tasks to allow it to rise toward creation, nuance and empathy. In the pessimistic scenario, it becomes the substitute for thought for generations of learners who will never have developed the cognitive muscles necessary to contest its answers.

The choice between these two scenarios is not technological. It is profoundly human. It depends on the collective will to maintain effort at the heart of education, to value deliberative slowness in a culture of the instantaneous, and to resist the illusion that convenience is synonymous with progress.

The ultimate challenge is this: how to make AI a tool of emancipation rather than of dependence? The answer lies neither in algorithms nor in public policies alone. It lies in our brains, and in the conscious decision to continue exercising them.

Appendix — Sources and references

Neuroscience and cognition

- Dehaene, S. (2018). Apprendre ! Les talents du cerveau, le défi des machines. Odile Jacob.

- Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux.

- Sparrow, B., Liu, J., & Wegner, D. M. (2011). Google Effects on Memory: Cognitive Consequences of Having Information at Our Fingertips. Science, 333(6043), 776–778. https://doi.org/10.1126/science.1207745

- Hebb, D. O. (1949). The Organization of Behavior. Wiley.

- Ebbinghaus, H. (1885). Über das Gedächtnis. Duncker & Humblot.

Educational sciences

- Vygotski, L. S. (1934/1985). Pensée et Langage. Éditions Sociales.

- Dewey, J. (1899/1990). The School and Society. University of Chicago Press.

- Flavell, J. H. (1979). Metacognition and Cognitive Monitoring. American Psychologist, 34(10), 906–911.

- Piaget, J. (1970). L'Épistémologie génétique. Presses Universitaires de France.

AI, society and epistemology

- O'Neil, C. (2016). Weapons of Math Destruction. Crown Publishers.

- Polanyi, M. (1966). The Tacit Dimension. Doubleday.

- Postman, N. (1985). Amusing Ourselves to Death. Viking Penguin.

- McLuhan, M. (1964). Understanding Media: The Extensions of Man. McGraw-Hill.

Historical and philosophical references

- Plato. Phaedrus (trans. L. Robin). Gallimard, coll. Pléiade.

- Condorcet, N. de (1791/1994). Cinq mémoires sur l'instruction publique. Flammarion.

- Erasmus, D. (1511). The Praise of Folly. (reference edition: Flammarion, 2011).