For three decades, the Purdue reference architecture, carried into the ISA-95 standard and etched in stone in industrial specifications worldwide, has been the invisible backbone of global manufacturing. It has structured the separation of responsibilities, organized data flows, defined security perimeters, and given industrial CIOs a common language for designing their information systems. For a long time, it was an elegant response to a real problem.

That time is over.

Not because the model was bad: in its context, it was remarkably well designed. But the foundations on which it rested (tolerable latency, data flowing upward, human decision-making, a clear separation between the operational world and the informational world) are precisely those that artificial intelligence is pulverizing, layer by layer, at a speed and scale that no one had anticipated.

What Purdue solved, and why this solution became a problem

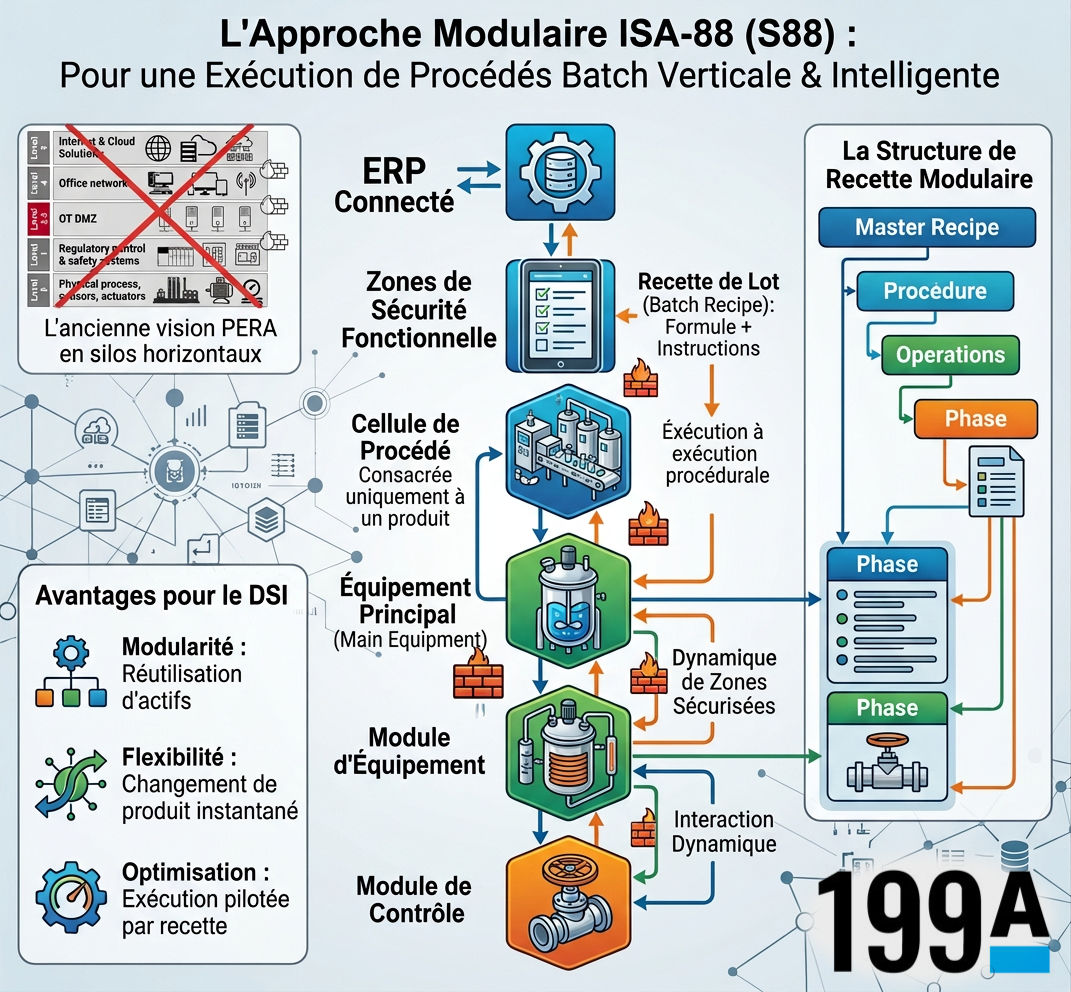

Theodore Williams, when he laid the foundations of the PERA model at Purdue University in the 1990s, was responding to a concrete problem: how to integrate heterogeneous industrial systems into a coherent, secure and governable architecture? The answer—a five-level pyramid ranging from the physical sensor to the enterprise information system—was almost pedagogically clear. Level zero houses sensors and actuators. Level one, programmable logic controllers. Level two, SCADA and supervisory systems. Level three, MES systems that orchestrate production execution. Level four, finally, ERP and planning systems that speak the language of business.

The beauty of this model lay in its segregation logic. Each layer communicates with its immediate neighbors, rarely beyond. Information rises slowly, is enriched at each level, and reaches management in an aggregated, normalized form, ready for decision-making. The air gap between OT layers and IT layers was not an architectural flaw but a deliberate security feature. A controller running a furnace at 1,400°C has, in this logic, no reason to be exposed to the company's IT network.
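The segregation rule can be made concrete in a few lines. The following is a minimal sketch, assuming a strict reading in which only adjacent levels may exchange data (the text says "rarely beyond", so real deployments are looser); the level names and the functions `flow_allowed` and `route` are illustrative, not taken from any standard.

```python
from enum import IntEnum


class PurdueLevel(IntEnum):
    """The five levels as listed in the text (names are illustrative)."""
    FIELD = 0        # sensors and actuators
    CONTROL = 1      # programmable logic controllers
    SUPERVISION = 2  # SCADA and supervisory systems
    EXECUTION = 3    # MES
    ENTERPRISE = 4   # ERP and planning


def flow_allowed(src: PurdueLevel, dst: PurdueLevel) -> bool:
    """Strict reading of the segregation rule: adjacent levels only."""
    return abs(int(src) - int(dst)) == 1


def route(src: PurdueLevel, dst: PurdueLevel) -> list[PurdueLevel]:
    """Levels a message passes through when it moves one level at a time."""
    step = 1 if dst >= src else -1
    return [PurdueLevel(v) for v in range(int(src), int(dst) + step, step)]


# A sensor reading bound for the ERP crosses every intermediate layer.
hops = route(PurdueLevel.FIELD, PurdueLevel.ENTERPRISE)
```

Under this reading, a direct exchange between level 0 and level 4 is simply not a legal flow, which is the property the air gap was meant to enforce.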

This logic was perfectly suited to a world where data was scarce, slow, and expensive to process. It is catastrophically unsuited to a world where data is abundant and instantaneous, and where inference capacity can now reside in a credit card-sized component installed directly on the production line.

AI as architectural solvent

What fundamentally changes with industrial AI in 2026 is not so much the available computing power, although this is staggering, as the location of intelligence. For decades, analysis lived at the top of the pyramid, in data centers, in Business Intelligence tools, in the brains of analysts who worked at a temporal and spatial distance from production. Actuation, action on the process, lived at the bottom of the pyramid: fast and dumb.

AI reverses this topography. Embedded inference models, which we now deploy directly on new-generation PLCs, on edge gateways or in real-time digital twins, can analyze, decide and actuate without ever sending data up to higher levels. An edge-deployed vibration anomaly detection model on an industrial compressor doesn't consult the MES before triggering a preventive slowdown. It acts, in milliseconds, based on patterns learned during months of training. Data no longer has to flow upward. It is local: processed, acted on and often consumed on-site.
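To illustrate what "deciding locally" means, here is a deliberately simple sketch. It is not a trained model: it swaps in a rolling z-score over recent vibration readings as a stand-in, and the class name `VibrationGuard`, the window size, the threshold and the action labels are all assumptions made for the example.

```python
from collections import deque
from statistics import mean, pstdev


class VibrationGuard:
    """Rolling z-score detector; a simple stand-in for a trained model."""

    def __init__(self, window: int = 50, z_limit: float = 4.0):
        self.history = deque(maxlen=window)  # recent normal readings
        self.z_limit = z_limit

    def observe(self, rms_mm_s: float) -> str:
        """Return the local action for one vibration reading."""
        action = "run"
        if len(self.history) == self.history.maxlen:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(rms_mm_s - mu) / sigma > self.z_limit:
                action = "slow_down"
        if action == "run":
            # Only normal readings update the baseline.
            self.history.append(rms_mm_s)
        return action


guard = VibrationGuard(window=10, z_limit=4.0)
warmup = [guard.observe(2.0 + 0.2 * (i % 2)) for i in range(10)]
normal = guard.observe(2.1)
spike = guard.observe(9.0)
```

The point of the sketch is the shape of the loop: the reading comes in, the decision comes out, and nothing in between waits for a supervisory or MES layer.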

This reality creates what could be called an architectural short-circuit. In the PERA model, a process adjustment decision was the result of a long loop: sensor, controller, SCADA, MES, human analysis, downward setpoint. This loop could take hours, sometimes days. An embedded AI system short-circuits this entire chain. This is not an optimization of the existing model. It is its functional negation.

At a major European automotive supplier, implementing a machine vision system coupled with a predictive quality control model made two entire levels of the Purdue pyramid structurally useless within the scope concerned. The sensor sees, the model judges, the actuator reacts. The MES learns the result after the fact, for regulatory traceability. The pyramid hasn't disappeared, but it has changed in nature: from a decision-making architecture, it has become a compliance architecture.

The silent disintegration of the responsibility layer

Where the PERA model's impact was deepest, and where its erasure is most dangerous, is in its organizational dimension. The pyramid wasn't just a technical diagram. It was an implicit organizational chart. Each layer had its managers, its skills, its tools, its metrics. The process control operator was sovereign over levels 1 and 2. The production manager reigned over level 3. The CIO and his teams governed level 4. The OT/IT boundary was also a boundary of profession, of culture, sometimes of collective bargaining agreement.

AI dissolves this geography of responsibilities with a brutality that few industrial organizations have had time to anticipate. Who is responsible for a decision made autonomously by an edge-deployed model? The OT engineer who integrated the sensor? The data scientist who trained the model? The CIO who validated the deployment architecture? The production manager who signed the solution purchase order? In the PERA model, this question rarely arose because the decision was always, ultimately, human. A human saw the alert, a human made the decision, a human assumed responsibility.

In the emerging architecture, the decision may have been made and actuated before a single human was aware of the situation. This isn't science fiction. This is what's happening today in continuous processes, in chemicals, in energy, in steelmaking. And this is what will intensify sharply in the next eighteen months with the generalization of industrial autonomous agents: systems capable not only of analyzing and acting, but of planning action sequences over longer time horizons.

For an industrial CIO, ignoring this question is professional misconduct. Answering it technically without addressing it organizationally and legally is a strategic error.

The Unified Namespace as metaphor for a new paradigm

Faced with the pyramid's explosion, an alternative architecture has taken hold in conversations among the most advanced system architects: the Unified Namespace, or UNS. The concept, popularized notably by Walker Reynolds and widely adopted in the process industries that are most mature in integrating advanced digital solutions, breaks radically with PERA's ascending logic. Rather than a hierarchical structure where data rises layer by layer, UNS proposes a centralized and contextual data space to which all systems (sensors, controllers, MES, ERP, AI models, operator interfaces) connect directly, as publishers or subscribers.

This model, often implemented via MQTT brokers or industrial data orchestration platforms, presents a fundamental architectural virtue: it makes the notion of layer disappear in favor of the notion of context. Data no longer belongs to a hierarchical level. It belongs to a physical asset, enriched with its operational context, accessible to any authorized consumer, whether human or algorithmic.
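The idea of context replacing layer can be sketched without any broker at all. The example below is an in-memory stand-in, not an MQTT client: it implements a simplified version of MQTT-style topic matching ('+' for one level, '#' for all remaining levels) and keeps the last value per topic. The class `Namespace`, the topic hierarchy `acme/plant1/utilities/compressor7/...` and the payload fields are hypothetical.

```python
from typing import Callable

Handler = Callable[[str, dict], None]


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Simplified MQTT-style matching: '+' is one level, '#' is the rest."""
    f_parts = topic_filter.split("/")
    t_parts = topic.split("/")
    for i, f in enumerate(f_parts):
        if f == "#":
            return True
        if i >= len(t_parts):
            return False
        if f != "+" and f != t_parts[i]:
            return False
    return len(f_parts) == len(t_parts)


class Namespace:
    """In-memory stand-in for a broker; keeps the last value per topic."""

    def __init__(self) -> None:
        self.retained: dict[str, dict] = {}
        self.subscriptions: list[tuple[str, Handler]] = []

    def subscribe(self, topic_filter: str, handler: Handler) -> None:
        self.subscriptions.append((topic_filter, handler))
        # Replay current state so a late subscriber gets context immediately.
        for topic, payload in self.retained.items():
            if topic_matches(topic_filter, topic):
                handler(topic, payload)

    def publish(self, topic: str, payload: dict) -> None:
        self.retained[topic] = payload
        for topic_filter, handler in self.subscriptions:
            if topic_matches(topic_filter, topic):
                handler(topic, payload)


uns = Namespace()
seen: list[str] = []
# An AI model and an operator screen would subscribe in exactly the same way.
uns.subscribe("acme/plant1/+/compressor7/#", lambda t, p: seen.append(t))
uns.publish("acme/plant1/utilities/compressor7/vibration", {"rms_mm_s": 2.1})
uns.publish("acme/plant1/utilities/pump3/flow", {"m3_h": 40.0})
```

Note that the subscriber addresses an asset, not a level: the topic path carries the context, and nothing in the code knows whether the publisher is a PLC, an MES or a model.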

For the CIO, UNS is not a turnkey solution. It's a design philosophy that implies completely rethinking industrial data governance, OT/IT access security (which can no longer be separated by a physical air gap when all actors consume the same source), and asset modeling in standardized ontologies, which frameworks like ISA-88 for batch equipment or Industry 4.0 Asset Administration Shell models are beginning to formalize.

The industrial CIO in 2026

The response to PERA's obsolescence is not to destroy it thoughtlessly. In many industrial environments—high-risk industries, environments subject to strict regulations like nuclear, pharmaceutical or certified agri-food—network segregation and upstream traceability remain non-negotiable regulatory requirements. Purdue, in these contexts, survives, not as a global architectural paradigm, but as guarantor of minimal compliance.

What the CIO must build is a hybrid and stratified architecture, capable of coexisting with inherited regulatory constraints while integrating new AI actuation paradigms. Concretely, this means accepting that different parts of the industrial architecture evolve at different speeds, with clearly defined perimeters where AI decision-making autonomy is authorized, documented and audited.
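One way to picture such a perimeter is as an explicit envelope that every autonomous proposal must pass through before it reaches the process. This is a minimal sketch under stated assumptions: the `Perimeter` fields, the `AutonomyGate` class, the "apply"/"escalate" outcomes and the furnace values are all hypothetical, chosen only to show authorization, documentation and audit in one place.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Perimeter:
    """Hypothetical description of where a model may act on its own."""
    asset: str
    parameter: str
    min_value: float
    max_value: float
    max_step: float  # largest change allowed in one autonomous move


@dataclass
class AutonomyGate:
    perimeters: dict[tuple[str, str], Perimeter]
    audit_log: list[dict] = field(default_factory=list)

    def decide(self, asset: str, parameter: str,
               current: float, proposed: float) -> str:
        """Return 'apply' or 'escalate' and record the outcome."""
        p = self.perimeters.get((asset, parameter))
        inside = (
            p is not None
            and p.min_value <= proposed <= p.max_value
            and abs(proposed - current) <= p.max_step
        )
        outcome = "apply" if inside else "escalate"
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "asset": asset, "parameter": parameter,
            "current": current, "proposed": proposed, "outcome": outcome,
        })
        return outcome


gate = AutonomyGate({
    ("furnace2", "setpoint_c"): Perimeter("furnace2", "setpoint_c",
                                          1350.0, 1420.0, 10.0),
})
small_move = gate.decide("furnace2", "setpoint_c", 1400.0, 1405.0)
large_move = gate.decide("furnace2", "setpoint_c", 1400.0, 1450.0)
unknown = gate.decide("press1", "force_kn", 100.0, 101.0)
```

Anything outside a declared perimeter, including an asset nobody has declared at all, falls back to a human. The default is escalation, not autonomy.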

This also means investing massively in what has become the real competitive differentiator of the coming years: not sensors, not AI models themselves, but the quality and governance of raw industrial data. The world's most sophisticated AI models are unusable if the data they rely on is dirty, poorly contextualized, without reliable history. Manufacturing industry suffers massively from this problem, and this is precisely where the CIO has his most critical role to play, not as a buyer of AI solutions, but as guardian of the enterprise's informational integrity.

Finally, and this is perhaps the most underestimated challenge, the industrial CIO of 2026 must pilot the redefinition of human roles in the decision chain. The production analyst who spent his days interpreting SCADA dashboards doesn't have the same value as five years ago. His residual value, and it is real, lies in his ability to supervise, challenge and correct autonomous systems, to maintain contextual expertise that AI cannot yet build alone, to be the interface between algorithmic rationality and the human complexity of the organization. Training these people, redefining their job descriptions, building AI system supervision tools: this is where the success or failure of the most ambitious industrial AI projects is decided.

The insurance dimension: the bottleneck no one sees coming

There exists a dimension of industrial AI deployment that CIOs have, at this stage, almost never included on their agenda. It's not technical. It's not organizational. It's economic and legal, and it constitutes, in all likelihood, the main limiting factor of the next decade.

The white paper "Delegated Decision Assurance", published in February 2026 by 199A Consulting, states this diagnosis with a precision that deserves to be taken seriously by any industrial decision-maker: "The limiting factor of the autonomous revolution is not technical. It is economic and legal. It lies in the absence of an infrastructure capable of absorbing, distributing and pricing the responsibility of decisions made by non-human agents."

The mechanism is simple, and it applies directly to industry. When an embedded AI agent makes an autonomous maintenance decision that leads to an unplanned production line shutdown, or worse, a safety incident, the legal responsibility chain explodes into fragments impossible to reassemble. The document identifies what it calls a "triple fracture": the triggering event becomes diffuse (who, among the base model designer, the integrator, the operator, is responsible?), the causal link becomes opaque (a deep neural network doesn't produce traceable linear reasoning), and attribution becomes problematic (each actor in the chain has solid arguments to deflect responsibility). This triple fracture is not a marginal difficulty—it constitutes, according to the authors, "a structural blockage that prevents the economy from crossing the threshold of total and authentic automation."

The practical consequences for an industrial CIO are immediate. Industrial insurance companies are beginning to ask precise questions about the decision perimeters of AI systems deployed in production. Supply contracts integrate increasingly complex clauses on automated decision responsibility. Boards of directors, personally exposed in case of damage caused by an autonomous agent, exert growing pressure to maintain human supervision that, precisely, cancels much of automation's benefit. The 199A report aptly names this dynamic: "Fiduciary prudence naturally leads to slowing, even blocking, deployment."

The response to this blockage, which the document calls a "Delegated Decision Assurance" infrastructure, is a set of technical, contractual, actuarial and regulatory mechanisms by which an identified entity agrees to guarantee decisions made by an autonomous agent, in exchange for compensation proportional to the risk assumed. It's not yet mature. But the need for it is now unavoidable, and the first forms are already emerging in the financial and energy sectors. For the industrial CIO, integrating this dimension from the design stage of the AI architecture, by building the decision encapsulation layers, behavioral monitoring and explainable auditing that this infrastructure requires, means not ending up tomorrow in a regulatory or insurance impasse after investing several million in deployments that no one can legally cover. Technology, as 199A puts it in a striking phrase, is like the combustion engine invented fifty years before traffic laws: "Technology would have stayed in garages, not from performance defects, but from framework defects."
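What an auditable decision trail could look like at the lowest level can be sketched as a hash-chained log, in which each recorded decision carries the hash of the previous one so that later edits are detectable. This is one possible building block, not something the 199A document prescribes; the function names and the record fields are assumptions.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(
        json.dumps(body, sort_keys=True).encode("utf-8")
    ).hexdigest()


def append_record(chain: list[dict], decision: dict) -> dict:
    """Append a decision, linking it to the hash of the previous record."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    body = {"decision": decision, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    chain.append(record)
    return record


def verify(chain: list[dict]) -> bool:
    """Recompute every hash and check each link to its predecessor."""
    prev_hash = "0" * 64
    for record in chain:
        body = {"decision": record["decision"],
                "prev_hash": record["prev_hash"]}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


chain: list[dict] = []
append_record(chain, {"model": "vib-guard-v3", "asset": "compressor7",
                      "inputs": {"rms_mm_s": 9.0}, "action": "slow_down"})
append_record(chain, {"model": "vib-guard-v3", "asset": "compressor7",
                      "inputs": {"rms_mm_s": 2.1}, "action": "run"})
intact = verify(chain)
```

A log like this does not explain a decision. It only guarantees that the record of what the agent saw and did has not been rewritten afterwards, which is a precondition for any later discussion of attribution.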

Outlook: architecture as a political act

In eighteen months, the first truly autonomous industrial AI agents, capable of managing entire production campaigns with intermittent human supervision, will be in production at the most advanced companies. In five years, they will be the norm in process industries. The question then is no longer technical. It is profoundly political, in the etymological sense: how do we want to organize power in tomorrow's industrial enterprise? What share of decision-making are we ready to delegate to systems we don't fully understand? How do we build trust—regulatory, social, operational—in architectures where machines decide?

The industrial CIO who doesn't ask these questions today while building his IS foundation will suffer them tomorrow as crises: a poorly attributed incident, floating legal responsibility, internal social resistance that will block the deployment of technically mature solutions.

Purdue's architecture was, fundamentally, a technical response to a political question: who controls the process? It had the merit of clarity. The next industrial architecture will have to answer the same question, in an infinitely more complex world, with infinitely more powerful tools, and an infinitely greater capacity for harm if the answer is poorly formulated.

This is not an engineering problem. It's a problem of industrial civilization. And the CIO, whether he wants it or not, is one of its main architects.

Sources cited: 199A Consulting, "Delegated Decision Assurance. The economic infrastructure that makes the world of operational AI possible," first edition, February 2026, V1.1 (https://199a.agency).

The author advises mid-sized industrial groups and mid-cap companies on designing their converged IS/OT architectures and governing their industrial AI programs.